I received my B.S. degree in Statistics from Wuhan University of Technology (WHUT, 武汉理工大学). Currently, I am a Ph.D. candidate in Computational Mathematics at the School of Mathematics, South China University of Technology (SCUT, 华南理工大学), advised by Prof. Delu Zeng. I also collaborate with researchers at SCUT (Junmei Yang, Min Chen, Jiacheng Li, Shigui Li), RIKEN-AIP (Qibin Zhao, Jian Xu, Zerui Tao, Yuning Qiu, Chao Li), Columbia University (John Paisley), University of Waterloo (Zhou Wang), Tsinghua University (Shian Du), and Shanghai Jiao Tong University (Wenjing Lu).

My research focuses on probabilistic modeling and generation, including deep generative modeling, density ratio estimation (DRE), and LLM post-training, with particular interest in diffusion models, normalizing flows, and stochastic interpolation. I aim to develop mathematically grounded methods for probabilistic inference. Recently, I have also become interested in applying DRE to post-training (LLM alignment, preference optimization) for trustworthy and safe LLMs. I have published papers at top AI conferences (ICLR, NeurIPS, ICML, CVPR) and in journals (IEEE T-IM, PR, ESWA, IoTJ, Neurocomputing).

I also serve as a reviewer for JMLR, ICML, NeurIPS, ICLR, CVPR, ECCV, AAAI, UAI, ACM MM, IEEE T-MM, IEEE T-ETCI, Internet of Things Journal (IoTJ), Expert Systems with Applications (ESWA)…

Feel free to reach me at 📧 weichen.work@qq.com / weichen001.work@foxmail.com.

🔥 News

- 2026.05: Our paper about disentangled preference optimization is accepted to ICML 2026. [Paper ↓]

- 2026.01: Our paper about the minimum variance path principle for DRE is accepted to ICLR 2026. [Paper ↓]

- 2025.10: Our paper about diffusion informer for time series modeling is accepted to Expert Systems With Applications (ESWA). [Paper ↓]

- 2025.10: Our paper about wavelet diffusion for time series modeling is accepted to IEEE T-IM. [Paper ↓] News🎉

- 2025.09: Our paper about diffusion modeling acceleration is accepted to NeurIPS 2025. [Paper ↓] News🎉

- 2025.09: Our paper about normalizing flows is accepted to Pattern Recognition (PR). [Paper ↓]

- 2025.08: Our paper about diffusion models for low-level CV is accepted to Neurocomputing. [Paper ↓]

- 2025.05: Our paper about stable & efficient density ratio estimation is accepted to ICML 2025. [Paper ↓]

- 2022.02: Our paper about efficient continuous normalizing flows is accepted to CVPR 2022. [Paper ↓]

📝 Publications

Deep Generative Modeling

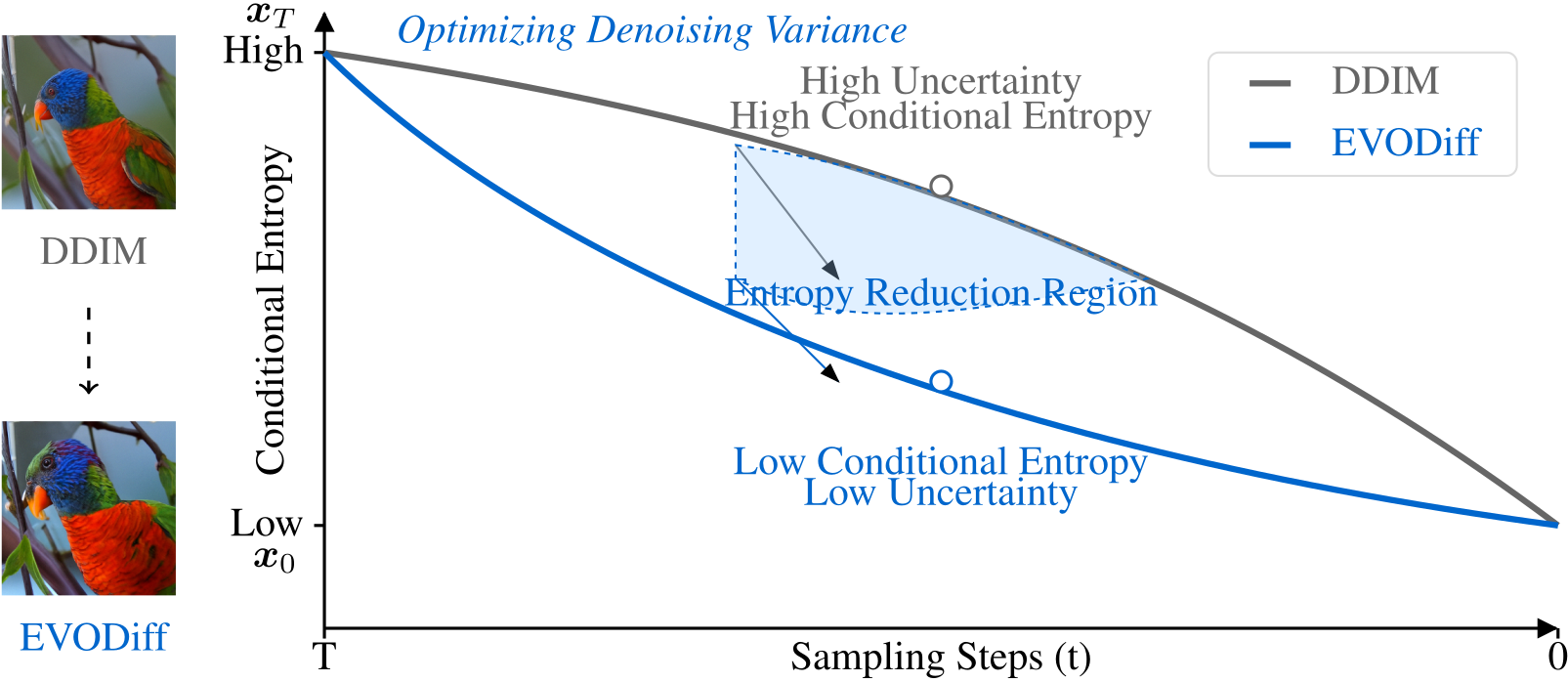

EVODiff: Entropy-aware Variance Optimized Diffusion Inference, Shigui Li, Wei Chen, Delu Zeng* [Bib]

NeurIPS 2025 | Paper | Code | News🎉

- Proposes EVODiff, a fast inference method for diffusion models that optimizes conditional entropy during denoising.

- Generates higher-quality images with fewer steps (e.g., 25% fewer steps on ImageNet-256) while significantly reducing artifacts.

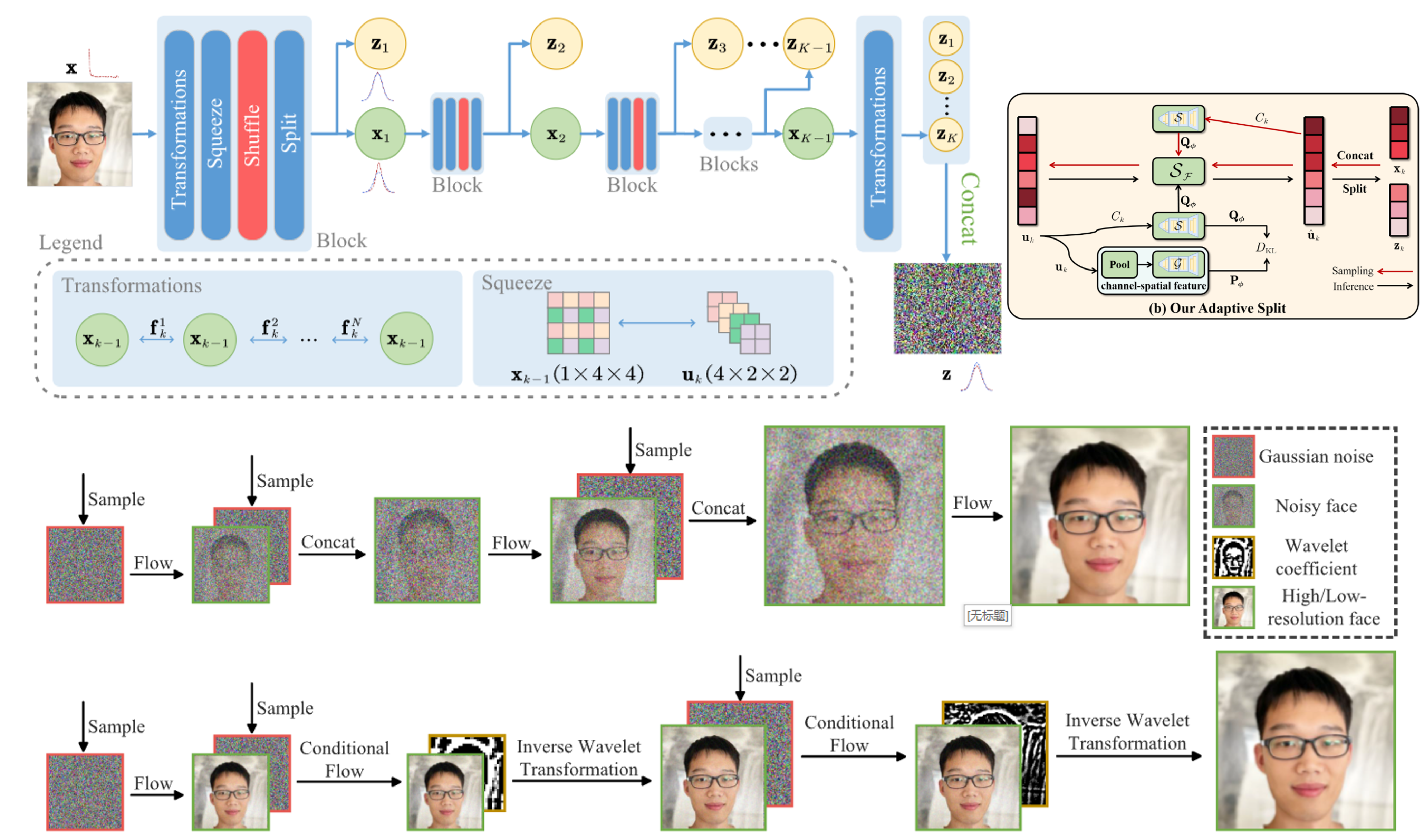

Entropy-informed weighting channel normalizing flow for deep generative models, Wei Chen#, Shian Du#, Shigui Li#, Delu Zeng*, John Paisley [Bib]

Pattern Recognition (PR) 2025 | Paper | Code

- Proposes EIW-Flow, which adaptively assigns channel-wise weights and shuffles latent variables in normalizing flows.

- Achieves state-of-the-art density estimation on CIFAR-10, CelebA, and ImageNet with negligible extra cost.

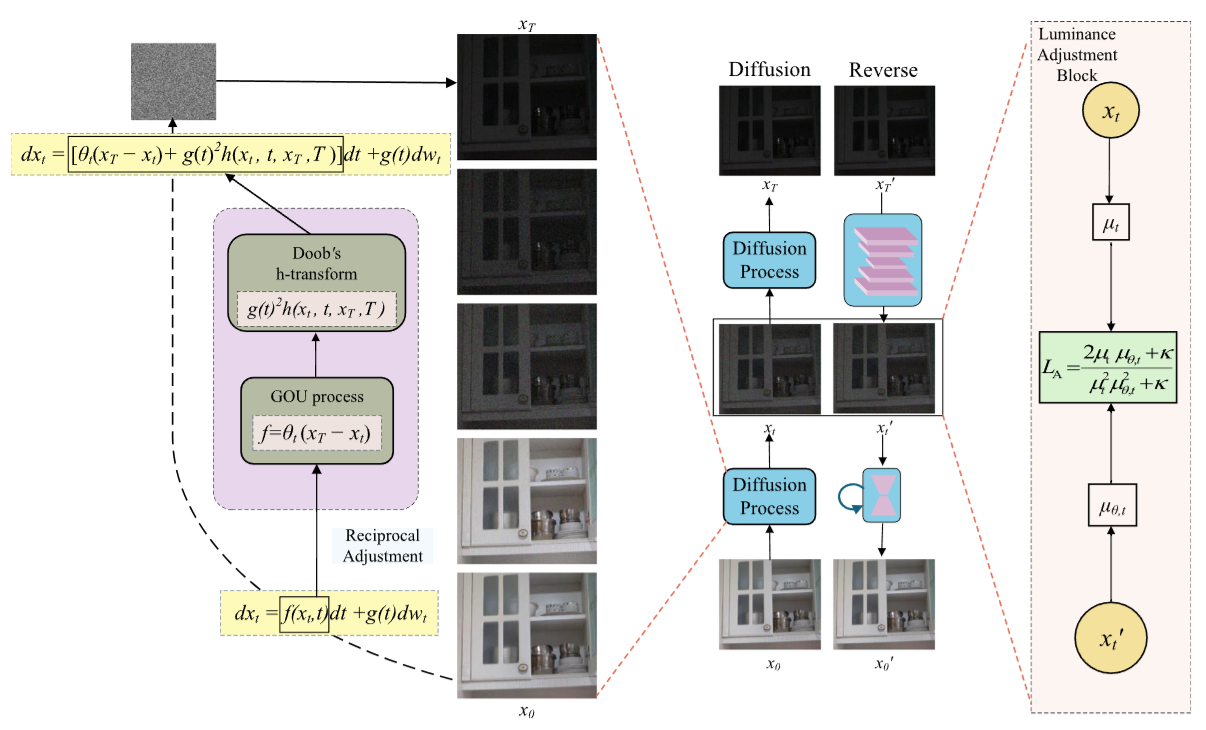

ReciprocalLA-LLIE: Low-light image enhancement with luminance-aware reciprocal diffusion process, Zhiqi Lin, Wei Chen, Jian Xu, Delu Zeng*, Min Chen [Bib]

Neurocomputing 2025 | Paper

- Proposes a reciprocal diffusion process within DDPM that iteratively enhances low-light images.

- Introduces a Luminance Adjustment Block for robust global brightness control, recovering details in dark regions.

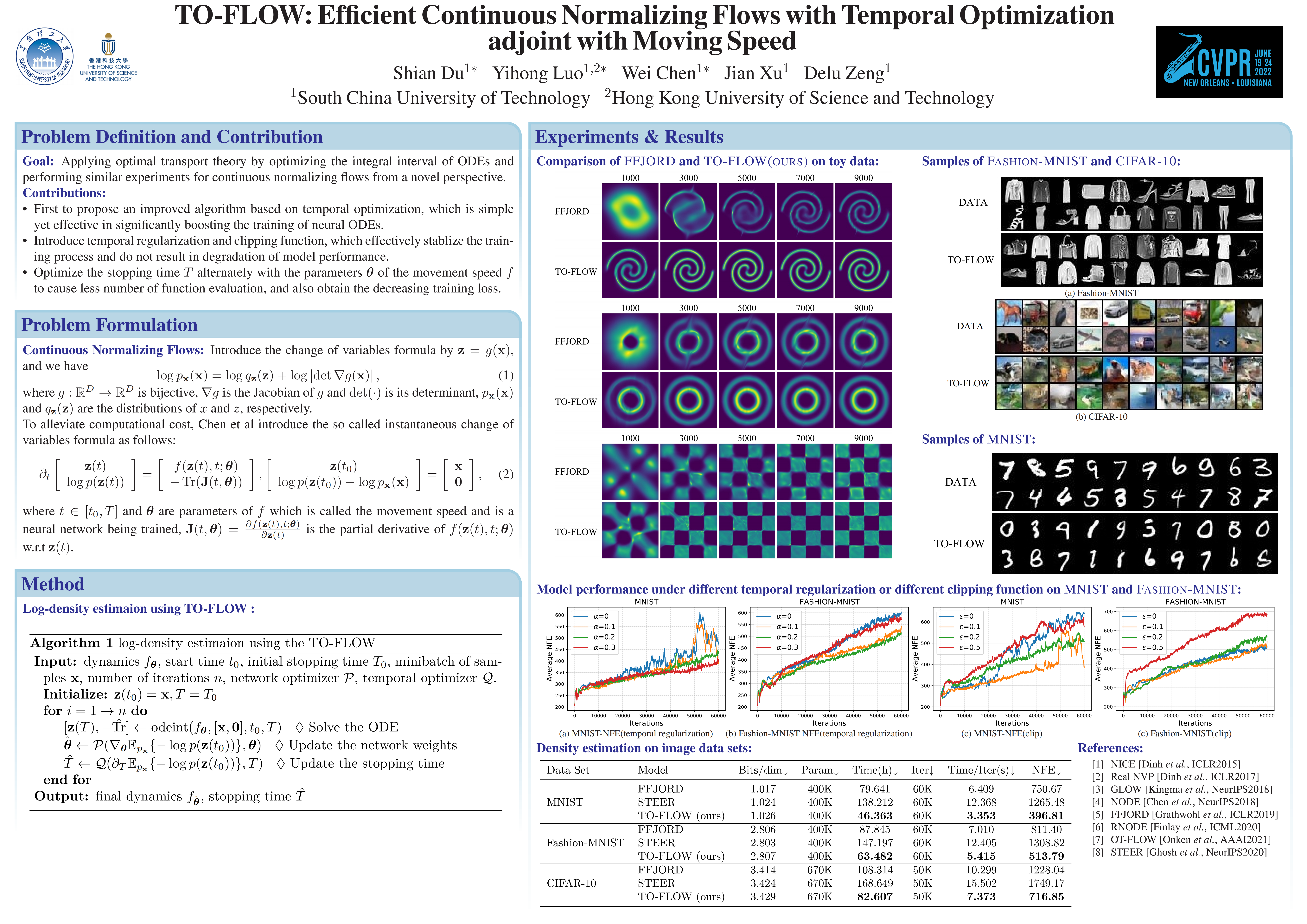

To-Flow: Efficient Continuous Normalizing Flows with Temporal Optimization Adjoint with Moving Speed, Shian Du#, Yihong Luo#, Wei Chen#, Jian Xu, Delu Zeng* [Bib]

- Proposes To-Flow, which optimizes the evolutionary time of neural ODEs via coordinate descent to speed up continuous normalizing flow training.

- Accelerates training by ~20% without sacrificing generation quality, and is compatible with existing regularization methods.

LLM/MLLM Post-Training

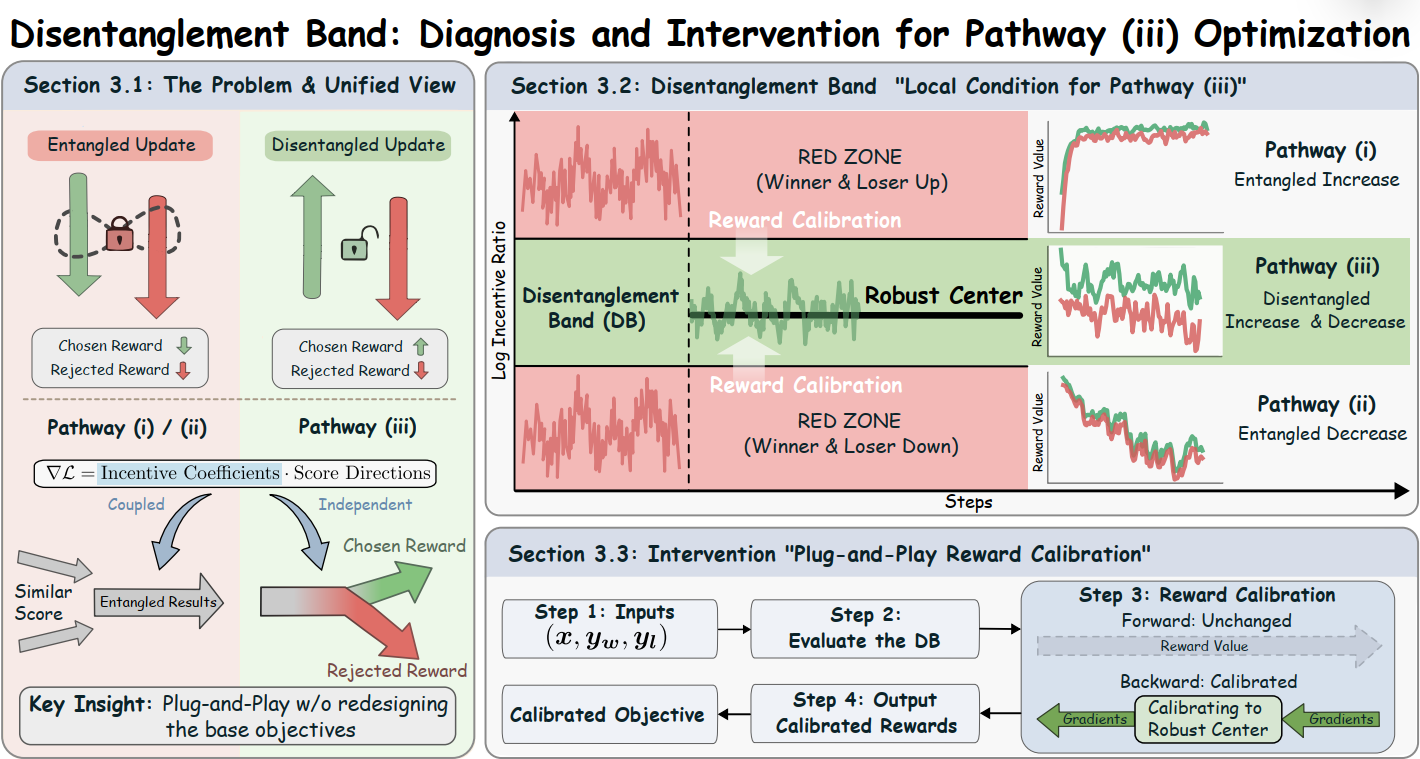

Towards Disentangled Preference Optimization Dynamics: Suppress the Loser, Preserve the Winner, Wei Chen, Yubing Wu, Junmei Yang, Delu Zeng*, Qibin Zhao, John Paisley, Min Chen, Zhou Wang [Bib]

- Reveals that diverse preference optimization objectives share the same update direction and differ only in two scalar weights, and identifies a simple condition (disentanglement band) for the desired learning pathway.

- Proposes a plug-and-play reward calibration method that rebalances updates to stay within this band, improving LLM alignment without modifying the base objective.

Density Ratio Estimation

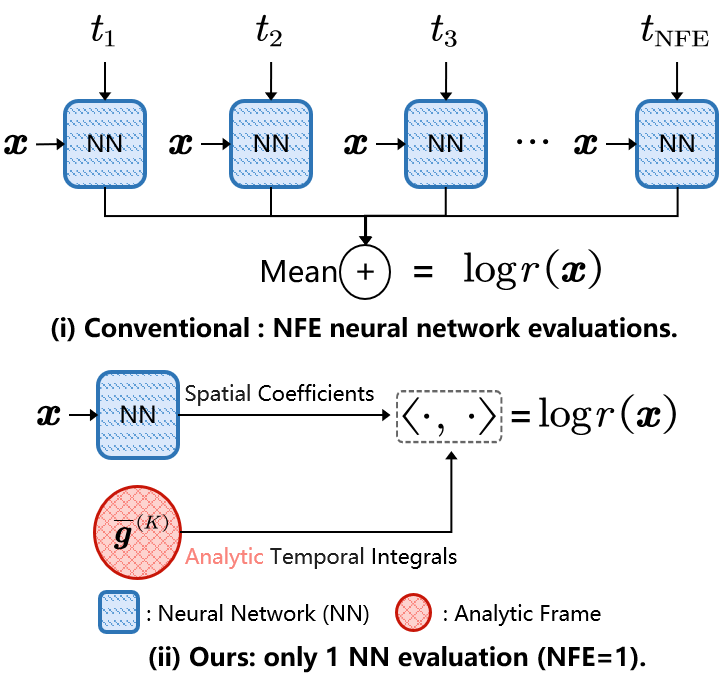

One-Step Score-Based Density Ratio Estimation, Wei Chen, Qibin Zhao, John Paisley, Junmei Yang, Delu Zeng* [Bib]

- Proposes OS-DRE, a solver-free framework that decomposes the time score into spatial and temporal parts, with the temporal part solved analytically.

- Enables accurate density ratio estimation with only one function evaluation, combining the speed of classical methods with the accuracy of score-based approaches.

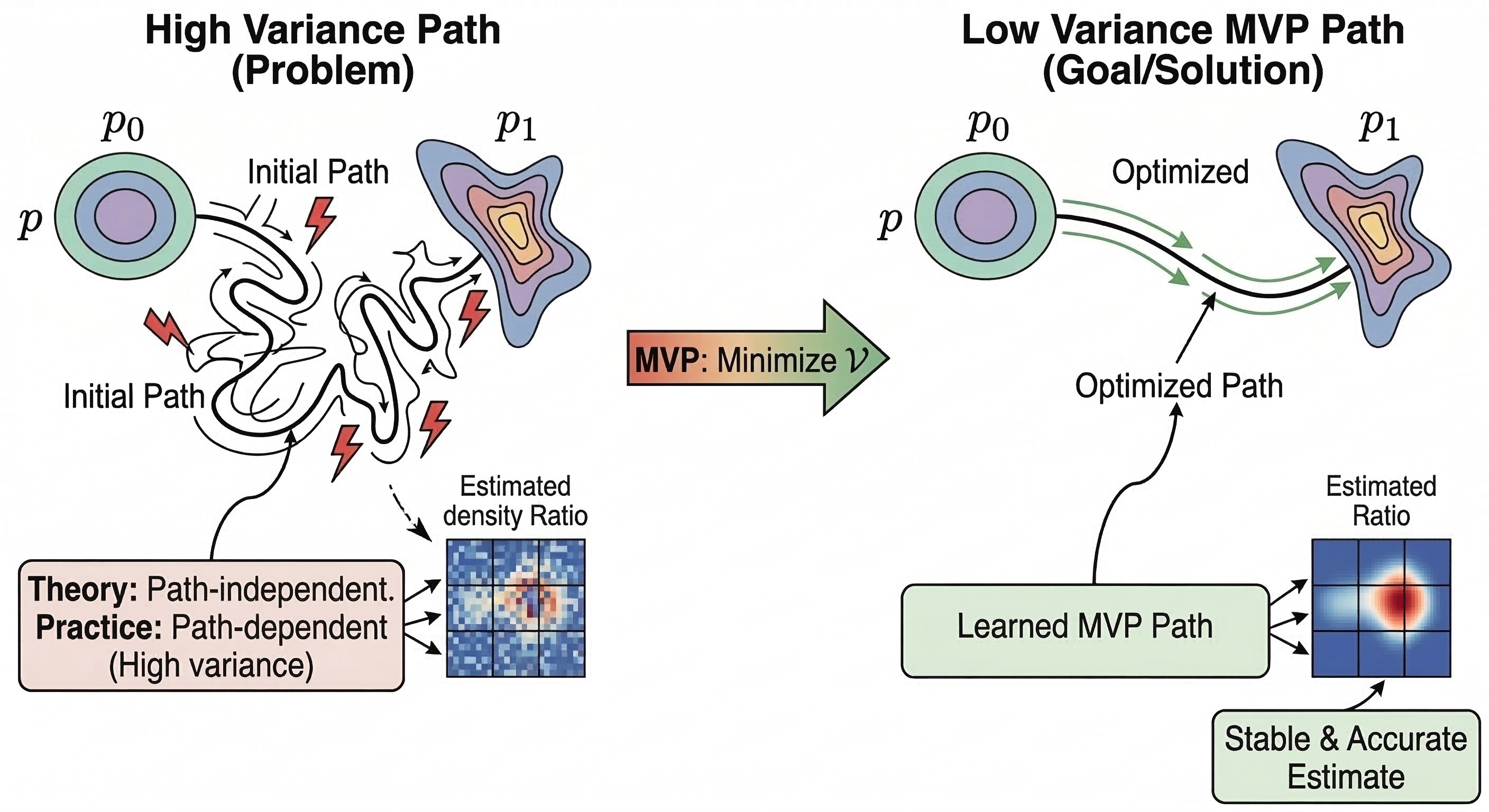

A Minimum Variance Path Principle for Accurate and Stable Score-Based Density Ratio Estimation, Wei Chen, Jiacheng Li, Shigui Li, Zhiqi Lin, Junmei Yang, John Paisley, Delu Zeng* [Bib]

- Resolves the path-dependence paradox in score-based methods by identifying the overlooked path variance term in training objectives.

- Derives a closed-form variance expression and learns optimal, data-adaptive interpolation paths automatically, achieving state-of-the-art DRE accuracy.

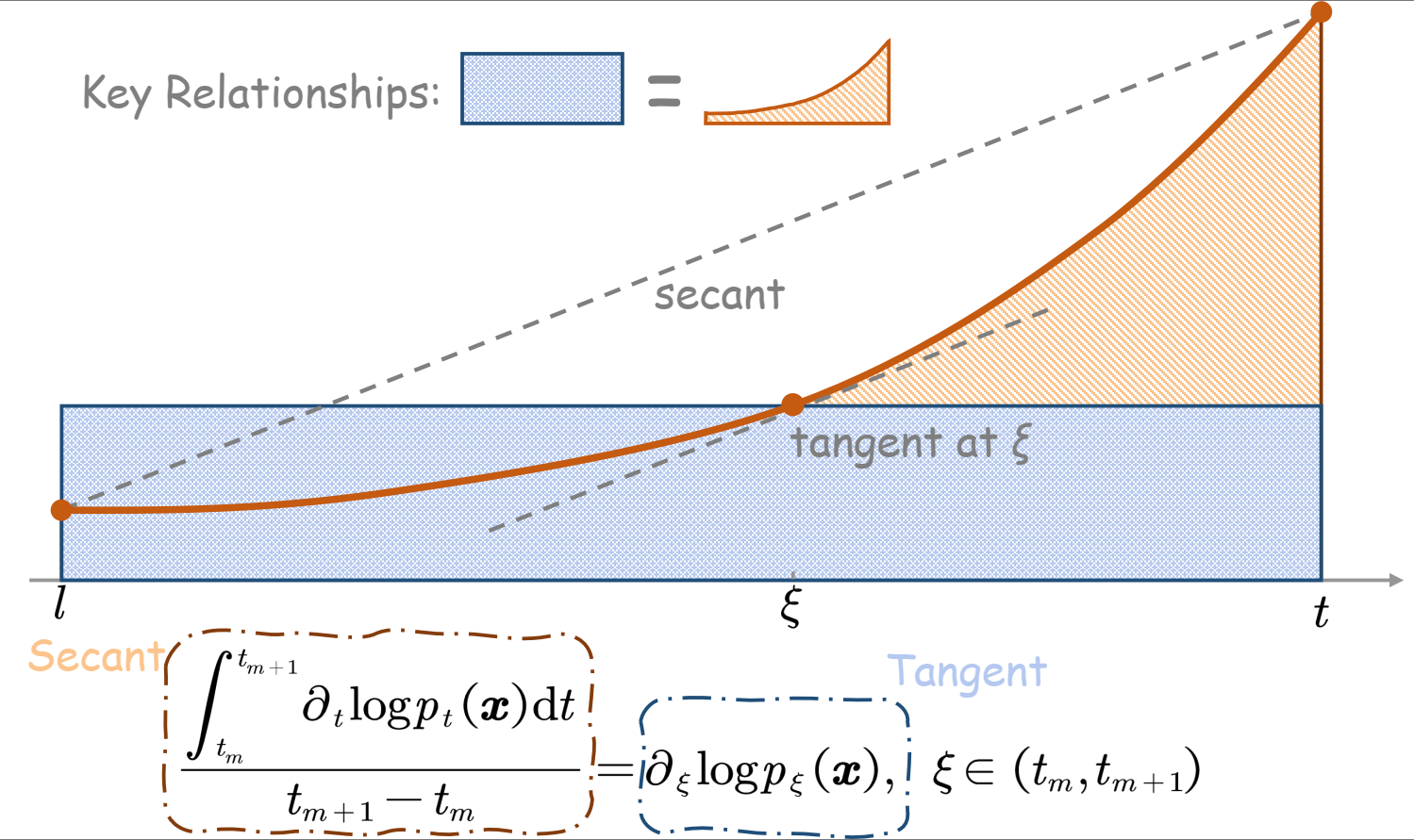

Diffusion Secant Alignment for Score-Based Density Ratio Estimation, Wei Chen, Shigui Li, Jiacheng Li, Jian Xu, Zhiqi Lin, Junmei Yang, Delu Zeng*, John Paisley, Qibin Zhao [Bib]

- Replaces high-variance tangent-based learning targets with their interval integral (secant), which is provably lower-variance and smoother for neural networks to learn.

- Achieves accurate density ratio estimation with fewer function evaluations, and handles large distribution discrepancies more robustly than prior methods.

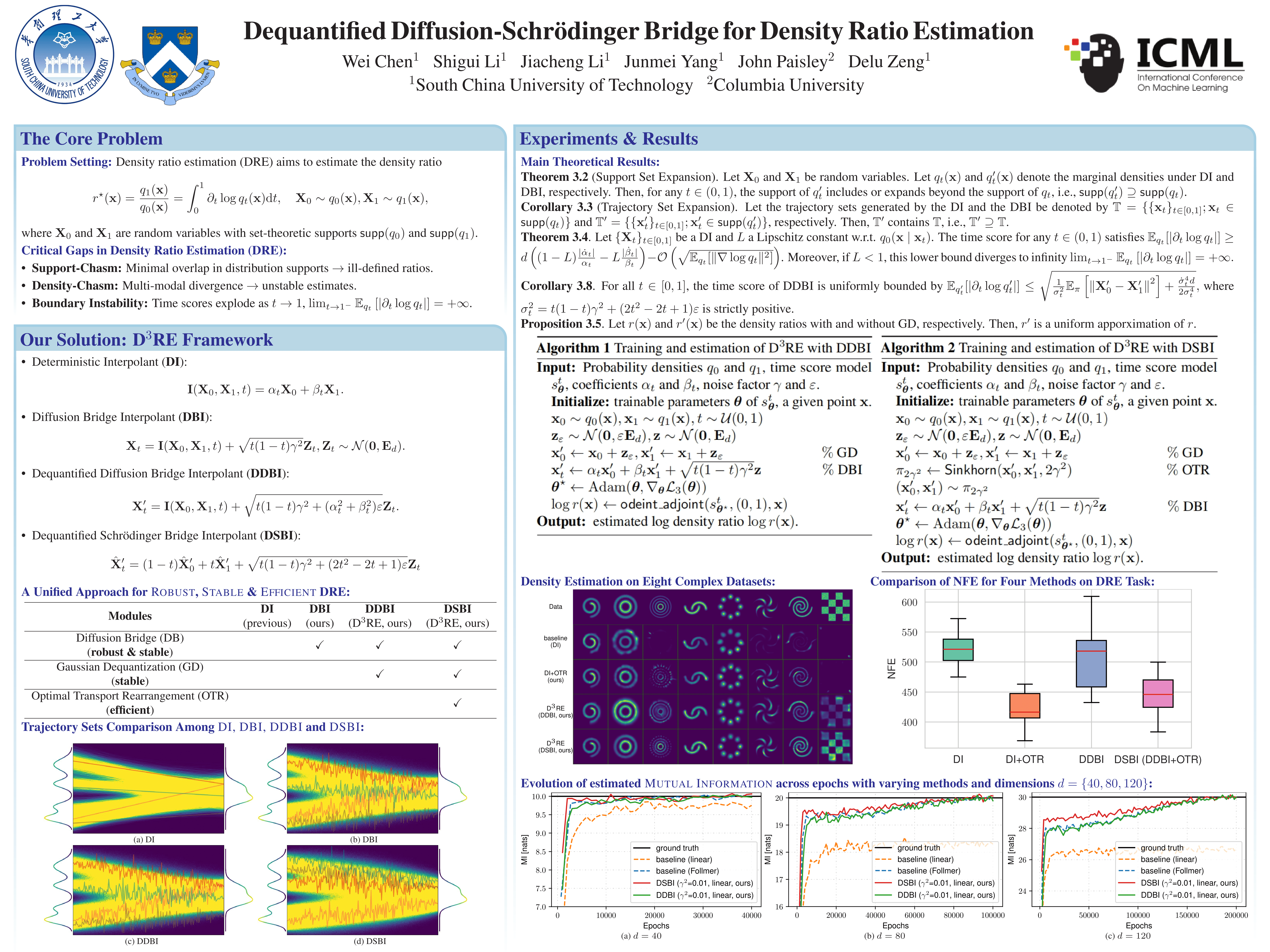

Dequantified Diffusion-Schrödinger Bridge for Density Ratio Estimation, Wei Chen, Shigui Li, Jiacheng Li, Junmei Yang, John Paisley, Delu Zeng* [Bib]

- Proposes D3RE, a unified framework that handles the density-chasm and support-chasm problems where existing methods fail.

- Combines diffusion bridges with optimal transport to expand support coverage and stabilize score learning, enabling robust estimation even for very different distributions.

Time Series Forecasting

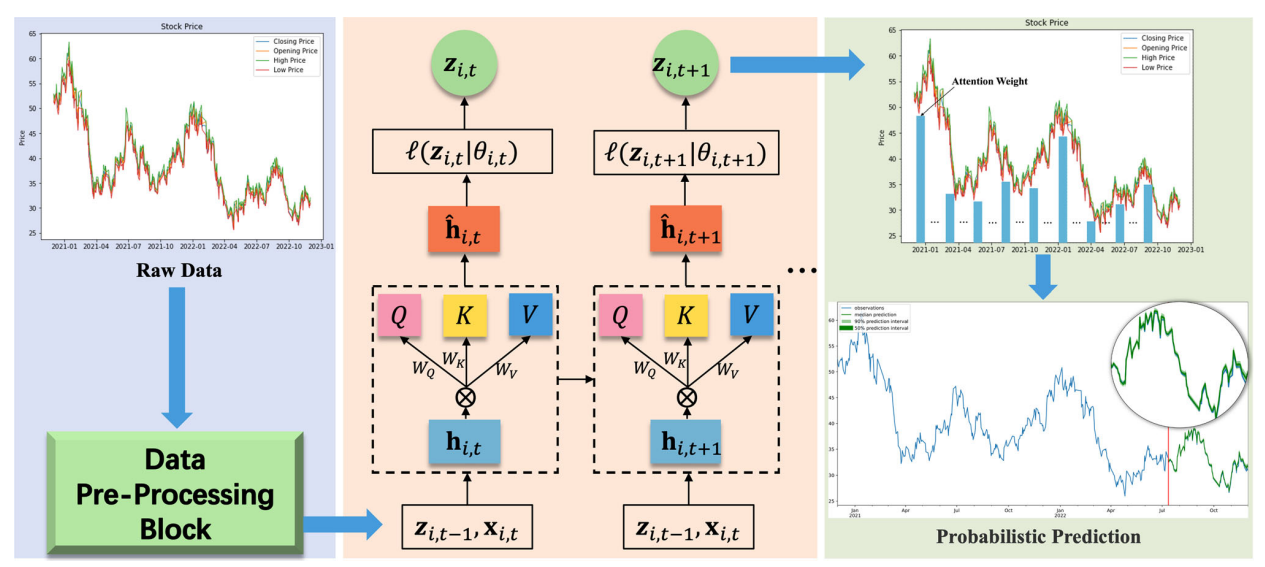

DeepAR-Attention probabilistic prediction for stock price series, Jiacheng Li, Wei Chen, Zhiheng Zhou, Junmei Yang, Delu Zeng* [Bib]

Neural Computing and Applications 2024 | Paper

- Proposes DeepAR-Attention, combining DeepAR with attention mechanisms for probabilistic stock price forecasting.

- Captures complex temporal dependencies and provides uncertainty-aware predictions for financial time series.

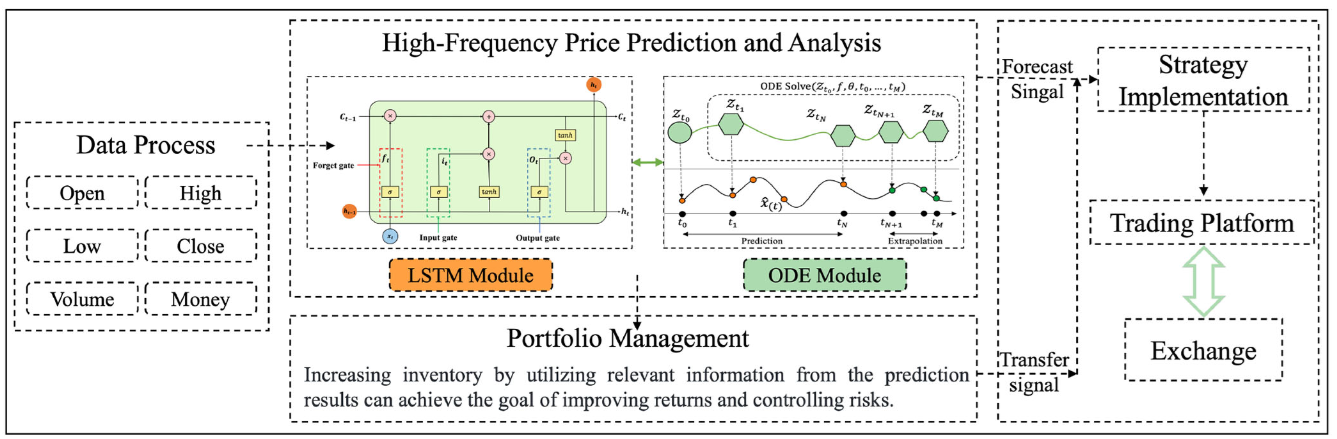

Neural ordinary differential equation networks for fintech applications using IoT, Jiacheng Li, Wei Chen, Yican Liu, Junmei Yang, Delu Zeng*, Zhiheng Zhou [Bib]

IEEE Internet of Things Journal (IoTJ) 2024 | Paper

- Develops neural ODE network approaches that model continuous-time dynamics for fintech applications in IoT.

- Captures irregularly sampled financial data more naturally than discrete-time models.

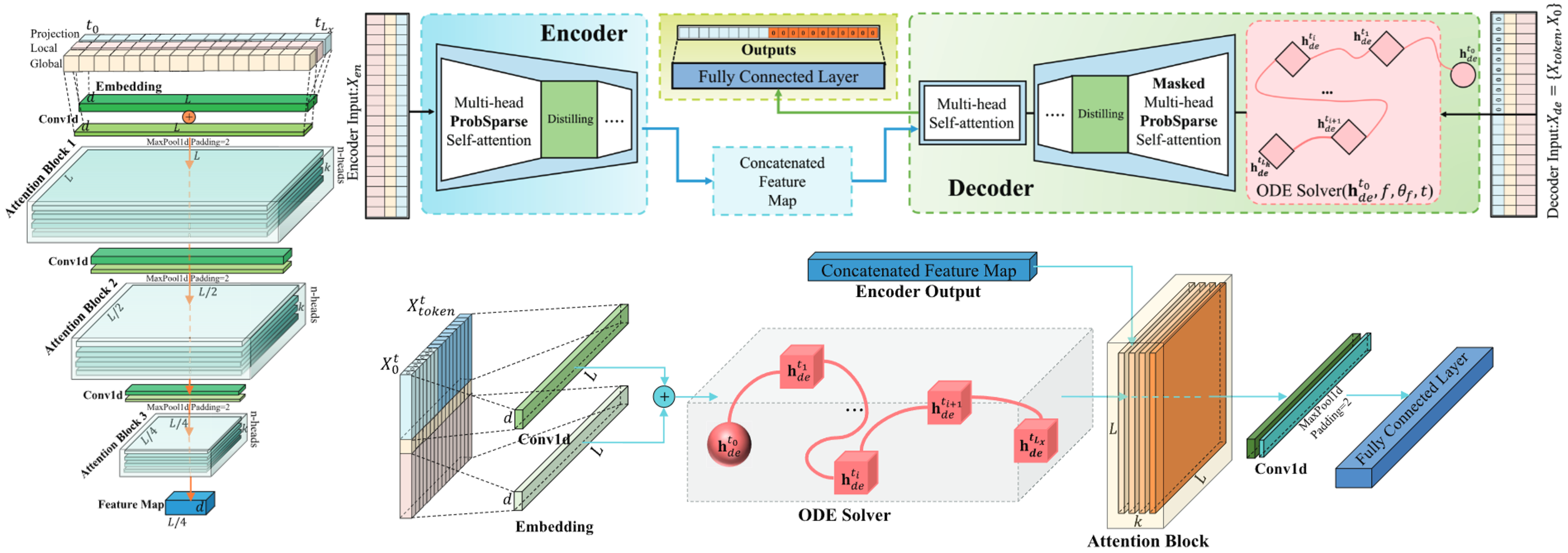

Integrating Ordinary Differential Equations with Sparse Attention for Power Load Forecasting, Jiacheng Li, Wei Chen, Yican Liu, Junmei Yang, Zhiheng Zhou, Delu Zeng* [Bib]

IEEE Transactions on Instrumentation and Measurement (T-IM) 2025 | Paper

- Proposes EvolvInformer, which integrates neural ODE solvers with sparse attention for long-sequence power load forecasting.

- Reduces forecasting error by 29.7% (MSE) while maintaining logarithmic memory complexity.

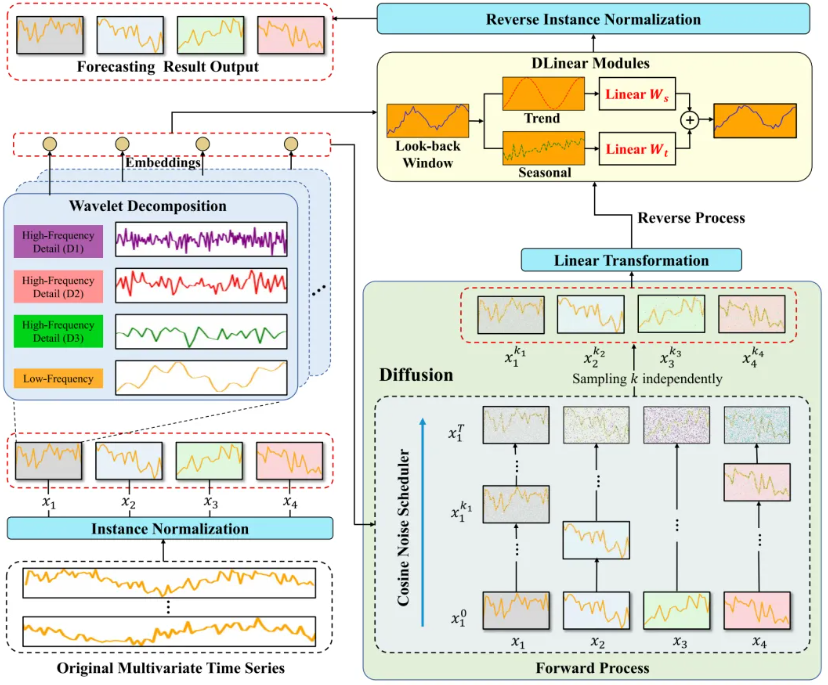

Generative Self-Supervised Time-Series Forecasting Leveraging Wavelet Diffusion, Jiacheng Li, Wei Chen, Yican Liu, Junmei Yang, Zhiheng Zhou, Delu Zeng* [Bib]

IEEE Transactions on Instrumentation and Measurement (T-IM) 2025 | Paper | News🎉

- Proposes TimeWaveDiff, a lightweight self-supervised framework that combines wavelet decomposition with diffusion modeling for time series.

- Captures multi-scale periodic patterns and achieves superior long-term forecasting accuracy with significantly lower computational cost.

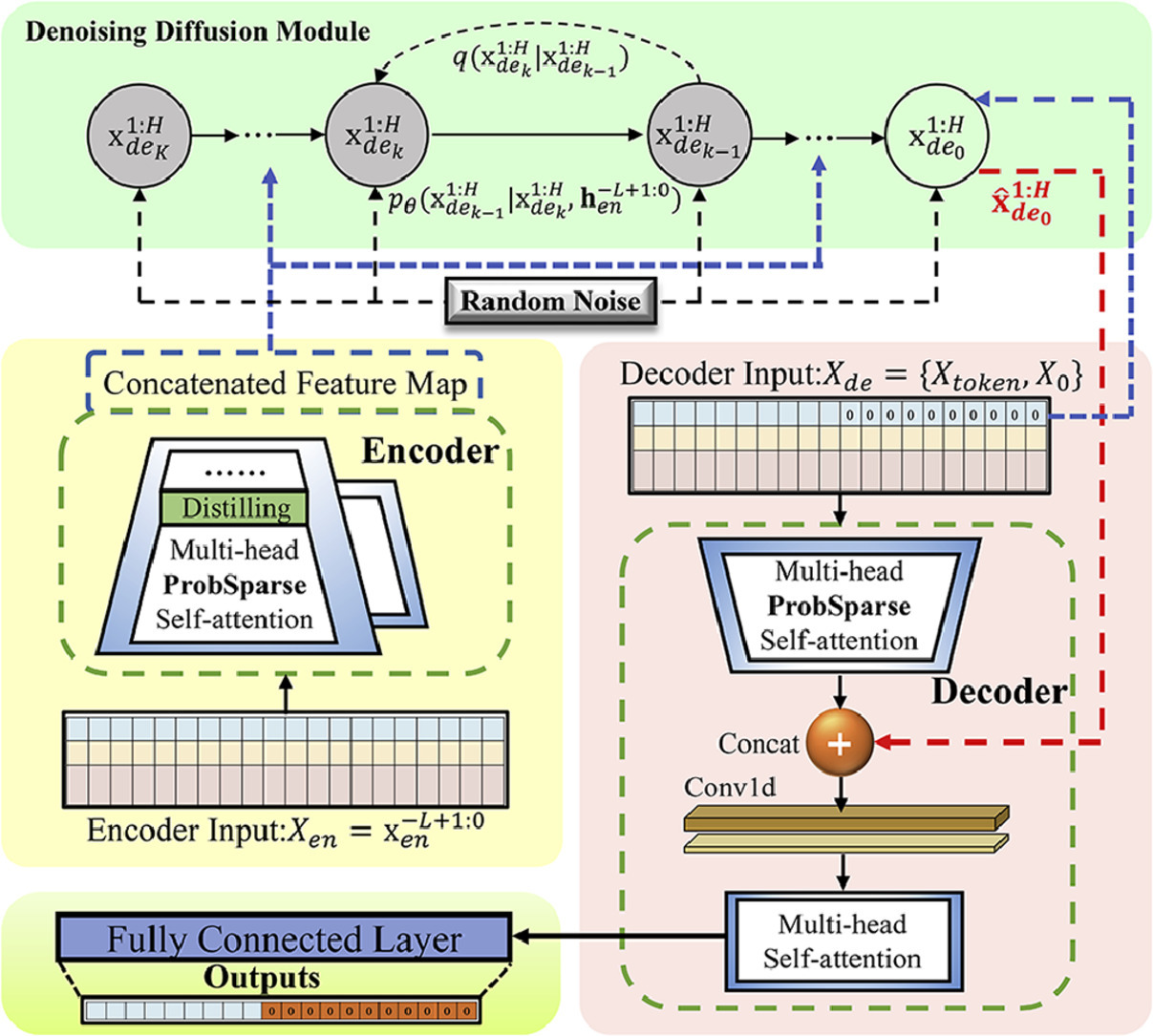

Diffinformer: Diffusion Informer model for long sequence time-series forecasting, Jiacheng Li, Wei Chen, Yican Liu, Junmei Yang, Zhiheng Zhou, Delu Zeng* [Bib]

Expert Systems with Applications (ESWA) 2025 | Paper

- Proposes Diffinformer, which combines conditional diffusion models with Informer’s efficient sparse attention for long-sequence time series forecasting.

- Achieves consistent improvements over existing methods across five large-scale real-world datasets.

🎖 Honors and Awards


📖 Education

💬 Invited Talks

💻 Internships